Portfolio

Here you’ll find examples of some of my most recent work. For a complete list of all my roles and assignments, along with my primary deliverables, check out the Engagements page.

Product Documentation/Information Architecture

Platform: DeveloperHub.io

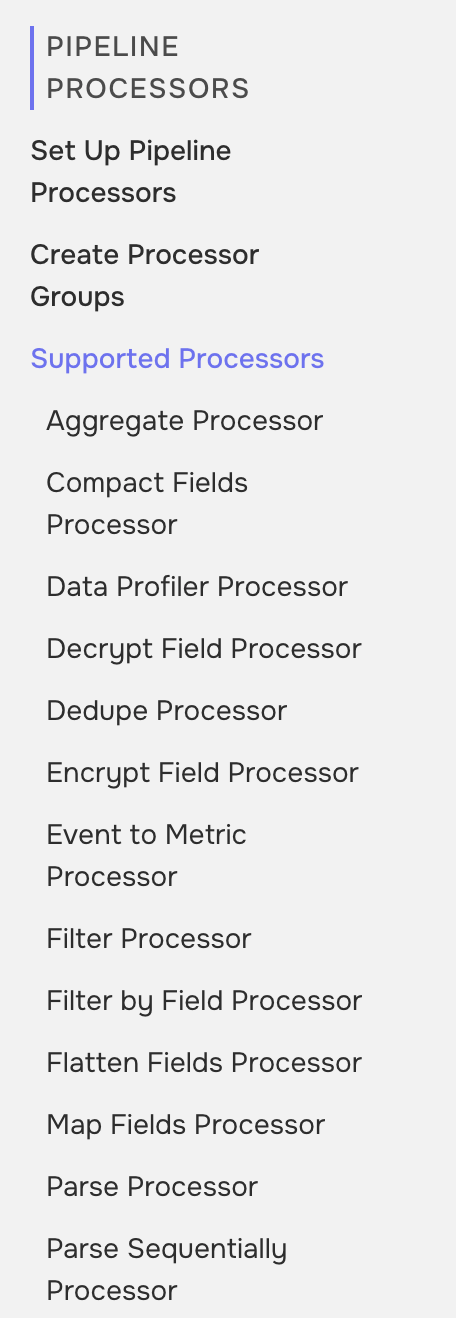

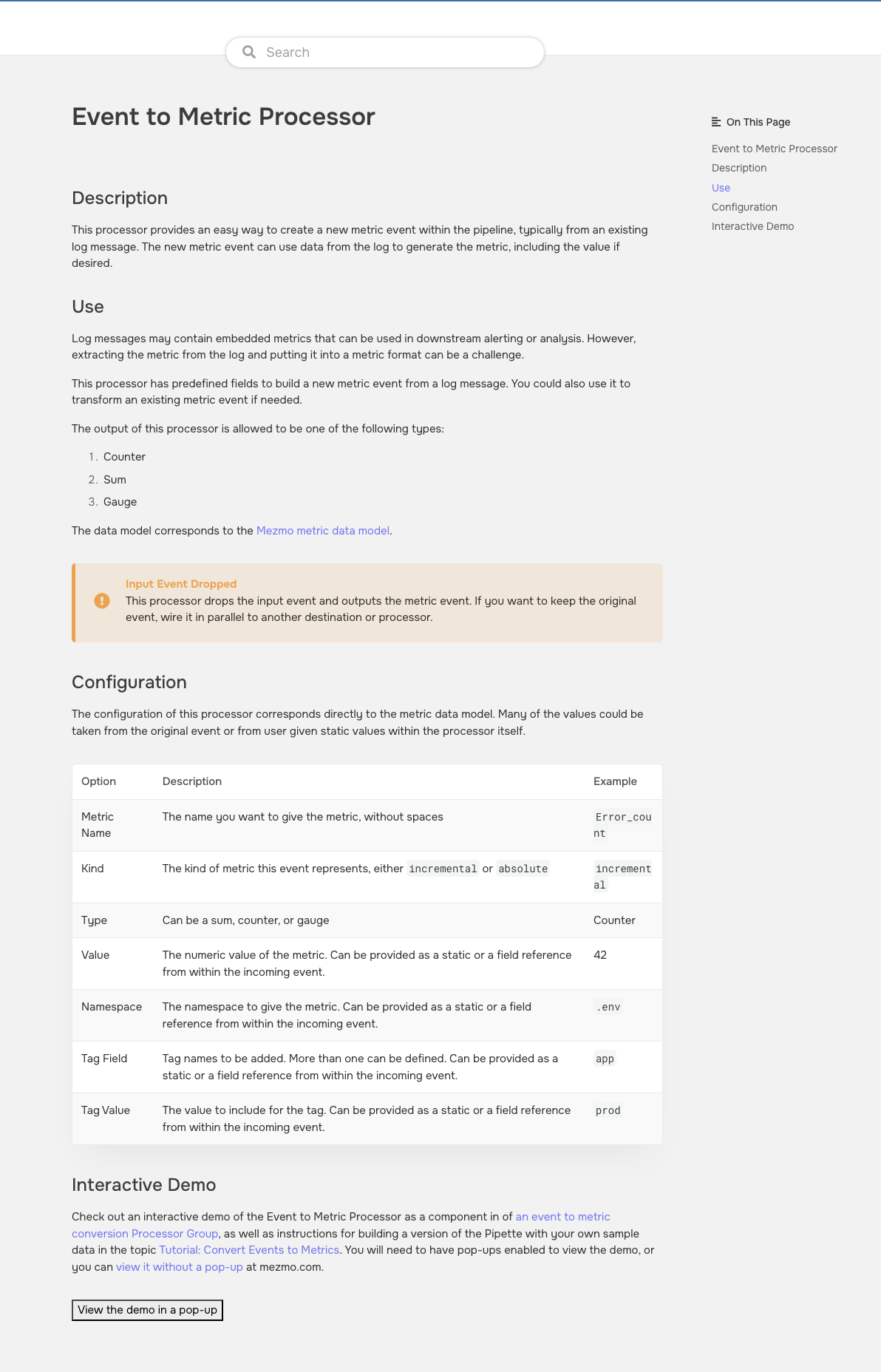

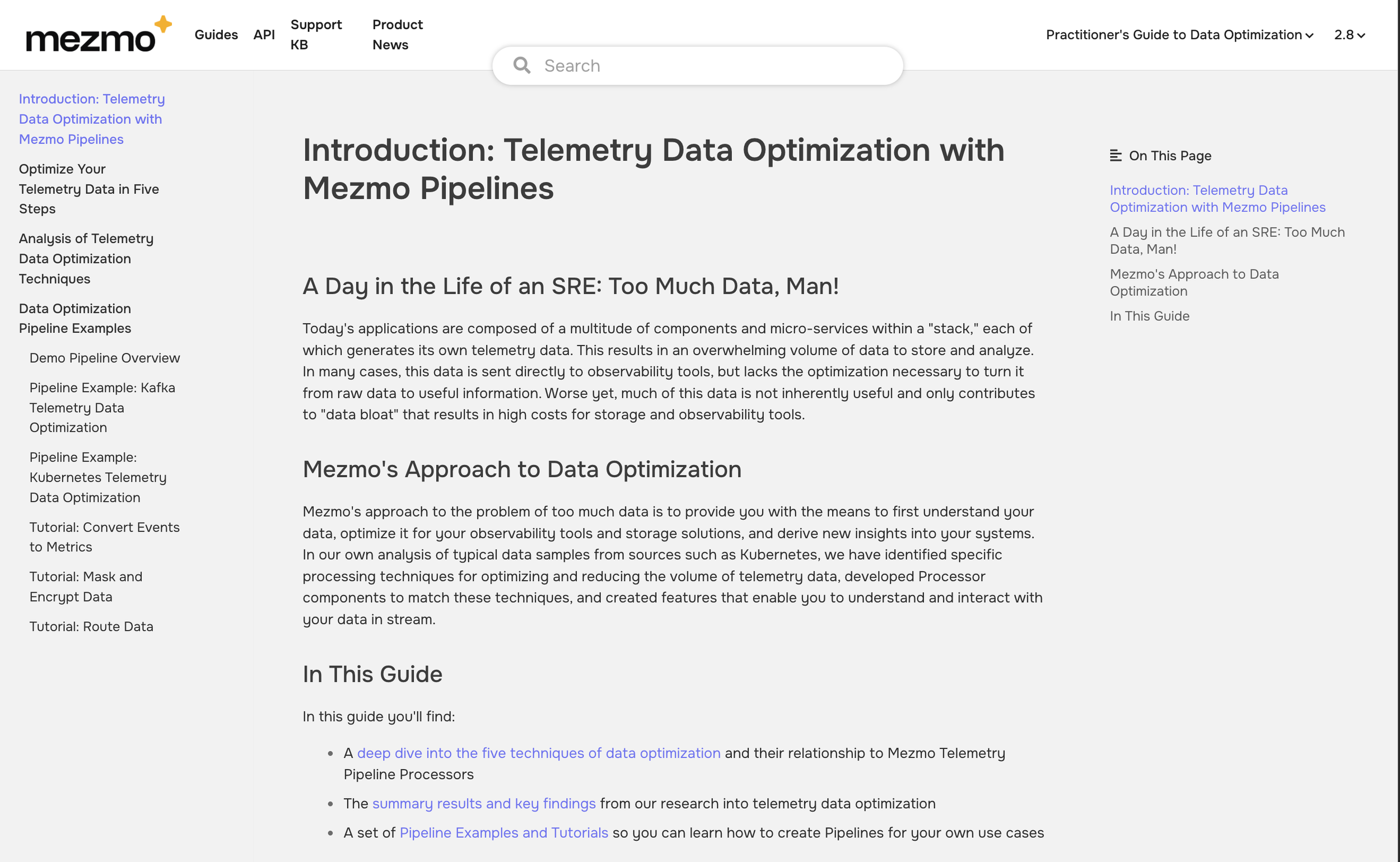

One of my first assignments when I joined Mezmo was to architect and write the initial topics for a new product, Mezmo Telemetry Pipelines. Working with the lead Product Manager, I created a taxonomy based on the essential components of a pipeline - ingesting sources, processors, and destinations - and on essential user-centric tasks, including how to build a pipeline and how to monitor data and set alerts. Realizing that the architecture needed to expand easily and rapidly, I created DITA-based templates that Engineering or Product Management could use to complete information about the components as they were ready to be released, and then put through a simple review workflow for publication. Because we agreed on a standard naming convention for component topics, Engineering was able to auto-construct in-app links to the appropriate topics when a component moved to Beta and its topic was published. Each component section had a similar structure, with an overview and basic setup topics, followed by a list of supported components. This also enabled consistent SEO for components that prospects were likely to search for. A topic cluster linking strategy enables cross-indexing among related topics in all of the Mezmo guides, so that they function more as knowledge bases than as book-centric guides. Updates are managed through a dedicated JIRA board.

The Table of Contents for the Mezmo Telemetry Pipelines product guide

Platform: DeveloperHub.io

Technical Marketing Collateral

Platform: Navattic

The section for Pipeline Processors

Instructional Guides and Tutorials

Having created the initial docs set for Mezmo Telemetry Pipelines, the next step was to create instructional guides, with content that could also be incorporated into the 30-day free trial and onboarding experience to familiarize prospects with product features and workflows. As Customer Success and Product Marketing discovered, without solutions-focused technical content, the initial customer experience with the product was like being handed a box of Legos without any instructions.

An example of a Processor component topic

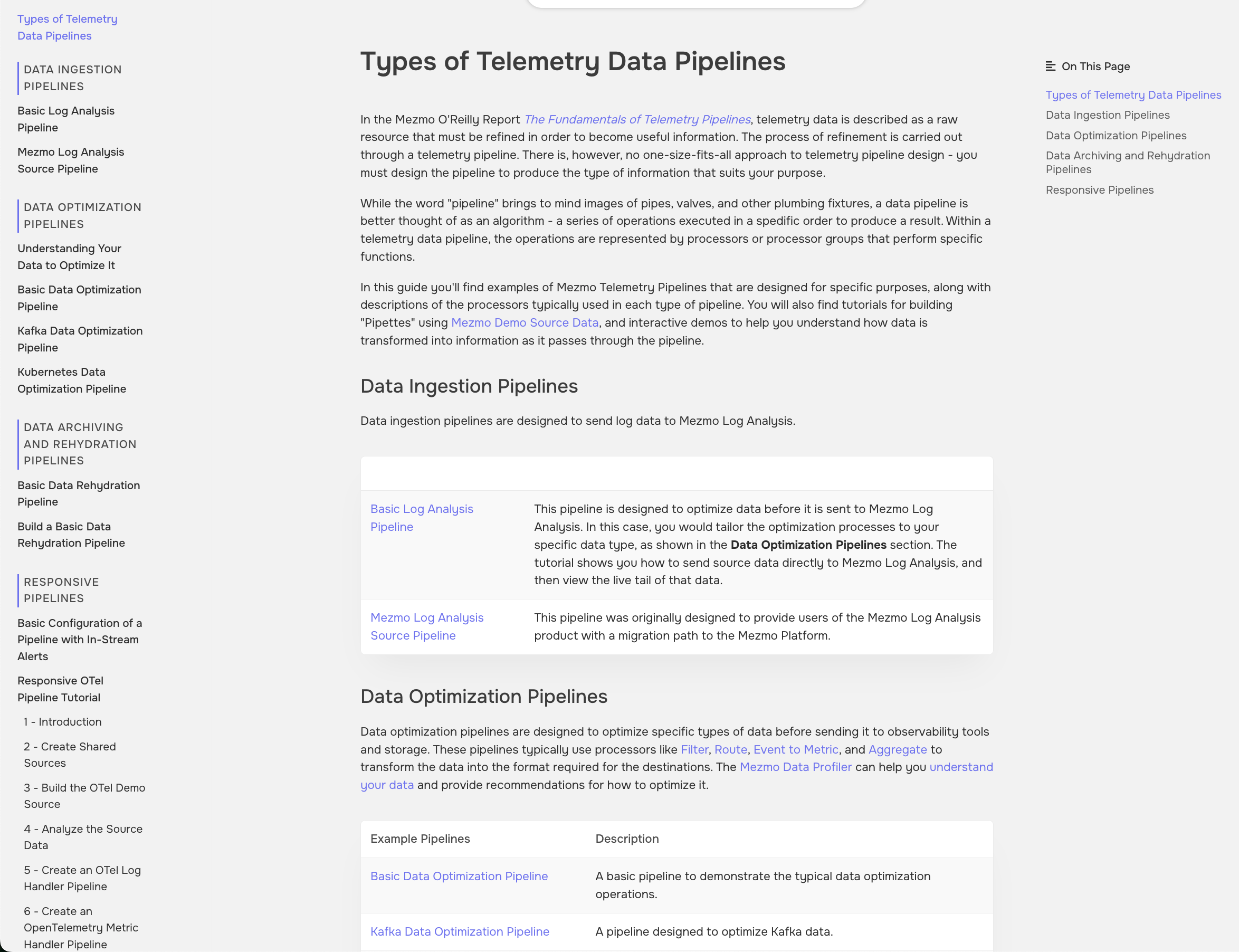

The Practitioner’s Guide to Data Optimization is based on the primary use case for Product Marketing and Sales Engineering, and incorporates both technical marketing collateral and instructional content. The Pipeline architecture content is based on demo pipelines that were included in the original version of the app, and provides readers with a step-by-step guide to the data transformations within the pipelines. The tutorials focus on using in-app demo data and a drop destination to enable a reader to build a demo pipeline, with a call to action to reach out to technical services for help in setting up the pipeline with their own data.

The Guide to Telemetry Pipeline Architecture is based on the five primary use cases developed by Customer Success, and is intended to serve as top-of-funnel thought leadership content that encourages prospects to sign up for a free trial and explore the use case that is most meaningful to them. Each section includes an overview of the type of pipeline, reference architectures, and tutorials that enable readers to build example pipelines and processor modules using in-app demo data and drop destinations.

Interactive Demos

An early challenge with the telemetry pipelines product was being able to illustrate how data is transformed as it passes through the processor chain. The original demo pipelines used by Sales Engineering presented long, branching chains that were difficult to follow, and often suffered from “demo gremlins” when using live data. On closer examination, all pipelines turned out to be composed of multiple sets of processors in a modular configuration, and these modules became the basis of the module demos. Navattic enabled readers to go through and examine each processor, its configuration, and how data was transformed as it entered and exited the processor, without needing to send live data through the pipeline. When embedded in tutorial and processor topics, the demos provided the reader with a map and reference guide to the processor configuration. The demos were also loaded onto tablets for use at events and conventions by the Mezmo Customer Success team to demonstrate the basic capabilities of the product. One customer testified that he was unable to get started with the product until he found the demos; he was then able to build a sample pipeline that helped him understand the product's capabilities and converted him from a free trial to a paid account.

Written in collaboration with Mezmo’s Chief Marketing Officer, this white paper is intended to demonstrate thought leadership in telemetry data management and its connection to specific features of the Mezmo Telemetry Pipelines product. Working from the tagline “Understand Optimize Respond,” it links each of the activities to a major component and the best practices workflow of the product.

For the second revised edition, I wrote a new first chapter incorporating the Understand Optimize Respond concept and expanding on the metaphor “data is the new oil.”

Ghostwriting for Technical Subject Matter

Ethical AI, A Handbook for Managers and Developers

Chapter 1, “Ethical Foundations”

I was initially approached by a friend and former colleague, who was the Chief Product Officer of an AI startup, about copyediting a book on ethical AI originally drafted by the company’s CTO. This project eventually involved writing new versions of four of the original seven chapters, and substantial developmental editing of the remaining three.

The original first chapter focused on a history of the concepts of moral philosophy and their ethical application, but seemed largely to be a generative AI summary of the Wikipedia page for Moral Philosophy. Based on a mention of the short story collection I, Robot by Isaac Asimov in the original, and drawing upon my own extensive background in the history of cybernetics and philosophy, my new chapter discusses the limitations of “laws” for guiding AI behavior, and lays the foundation for subsequent chapters on the various dimensions of ethical AI.